AI-CCORE Blogs

Strix Review: Security Testing for Vibe Coders Who Actually Care

1 Introduction

If you’re vibe coding with AI (shipping features fast, iterating quickly), security testing probably feels like a buzzkill. You’re in flow state, Claude just generated a perfect API endpoint, and the last thing you want is to context-switch into “security mode” and manually test for SQL injection. But here’s the thing: that IDOR in your user API? That XSS in your search bar? They’re shipping to production too.

Strix is an autonomous AI penetration testing framework that finds and validates real vulnerabilities while you keep coding. It’s not a linter that flags “potential issues”. It’s a team of AI agents that actually exploit your app, prove the vulnerability exists, and hand you a working proof of concept. Think of it as your security co-pilot that works in the background while you stay in flow.

2 Why This Matters Now

Yesterday, literally as I’m writing this review, news broke that a hacker used Anthropic’s Claude AI to breach multiple Mexican government agencies. The attacker jailbroke Claude, bypassing safety guardrails to generate exploit scripts and automate attacks. The result: 150 gigabytes of stolen data, including records linked to 195 million taxpayers, voter information, and government employee credentials.

The hacker also attempted to use ChatGPT for lateral movement guidance and detection probability calculations. According to Gambit Security’s research, ChatGPT produced detailed attack plans with ready-to-execute instructions.

This isn’t theoretical anymore. AI-powered hacking is happening right now, in production, against real targets. The tools attackers are using (consumer AI chatbots with jailbroken prompts) are accessible to anyone.

Here’s the uncomfortable reality: if attackers are using AI to find and exploit vulnerabilities, defenders who rely on manual testing or traditional scanners are already behind. The security arms race has entered a new phase where AI capabilities determine who wins.

This is exactly why tools like Strix matter. It’s the same AI-powered approach, but used ethically and proactively. Instead of waiting for an attacker to jailbreak Claude and exploit your app, you use Strix to find those vulnerabilities first. Same techniques, same automation, same AI reasoning - but you’re the one running the test, and you’re the one who gets to fix the issues before they’re exploited.

The choice isn’t whether AI will be used for security testing. That’s already decided. The choice is whether you’re using it defensively or waiting for attackers to use it offensively against you.

3 What Is Strix?

Strix is an open-source security testing framework built on multi-agent AI orchestration. Released in August 2025 by the team at usestrix, it’s licensed under Apache 2.0 and actively maintained on GitHub. The current version is 0.8.2 (as of this review).

The core concept: instead of one AI trying to do everything, Strix creates a graph of specialized agents. One agent maps your attack surface. Another tests for SQL injection. A third validates findings. A fourth writes the vulnerability report. They collaborate, share context, and work in parallel - like a real penetration testing team.

It’s designed for the reality of modern development: you’re building fast with AI assistance, you care about security, but you don’t have time (or budget) for manual pentests on every sprint.

4 Key Features

4.1 Graph of Specialized Agents

The architecture is genuinely clever. Strix creates a tree of agents, each with a specific job:

• Root agent coordinates the overall scan

• Discovery agents map attack surfaces (subdomains, endpoints, code structure)

• Vulnerability-specific agents test for SQLi, XSS, IDOR, etc.

• Validation agents prove findings are real (not false positives)

• Reporting agents document confirmed vulnerabilities

• Fixing agents (white-box only) patch code and verify the fix works

Each agent gets loaded with “skills”: markdown files containing deep expertise on specific vulnerability types. Strix includes 17 vulnerability-specific skills covering everything from IDOR (213 lines of advanced techniques) to SQL injection (190 lines covering union-based, blind, error-based, time-based, and ORM bypass methods). This isn’t surface-level scanning. Agents share a workspace and proxy history but have isolated browser and terminal sessions. They work in parallel, which is why deep scans can spawn dozens of agents simultaneously.
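To make the tree idea concrete, here is a minimal sketch of how a root agent might spawn specialized children. This is my own illustration, not Strix’s code: the `Agent` class, the `spawn` and `walk` methods, and the skill names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Sequence, Tuple


@dataclass
class Agent:
    """One node in a hypothetical agent tree (not Strix's real class)."""
    name: str
    role: str
    skills: List[str] = field(default_factory=list)
    children: List["Agent"] = field(default_factory=list)

    def spawn(self, name: str, role: str, skills: Sequence[str] = ()) -> "Agent":
        """Create a child agent with its own role and skills, and attach it."""
        child = Agent(name, role, list(skills))
        self.children.append(child)
        return child

    def walk(self, depth: int = 0) -> Iterator[Tuple[int, "Agent"]]:
        """Yield (depth, agent) pairs in depth-first order."""
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


# Build a small tree that mirrors the roles listed above.
root = Agent("Root", "coordinator")
recon = root.spawn("Discovery (API)", "discovery")
sqli = recon.spawn("SQLi Agent (Login Form)", "vulnerability", ["sql_injection"])
sqli.spawn("SQLi Validation Agent (Login Form)", "validation", ["sql_injection"])
recon.spawn("IDOR Agent (User API)", "vulnerability", ["idor"])

for depth, agent in root.walk():
    print("  " * depth + f"{agent.name} [{agent.role}]")
```

The point of the structure is that each child carries only the skills it needs, while the parent keeps track of what it delegated.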

4.2 Kali Linux Sandbox with Full Toolkit

Every scan runs in a Docker container based on Kali Linux. The sandbox includes:

• nmap, subfinder, httpx, gospider for reconnaissance

• nuclei, sqlmap, zaproxy, wapiti for vulnerability scanning

• ffuf, dirsearch, katana, arjun for fuzzing and discovery

• Caido proxy (modern alternative to Burp) for traffic analysis

• Playwright for browser automation (multi-tab, handles SPAs)

• Python 3, Node.js, Go for custom exploit development

• jwt_tool, wafw00f, interactsh-client for specialized testing

• semgrep, bandit, trufflehog for static code analysis

Your local machine never touches the target. The container is ephemeral - destroyed after the scan completes.

4.3 Mandatory Validation (Zero False Positives)

This is the design philosophy that sets Strix apart. The system prompt literally says: “A vulnerability is ONLY considered reported when a reporting agent uses create_vulnerability_report with full details.”

The workflow is enforced:

1. Discovery agent finds potential SQLi in login form

2. Spawns “SQLi Validation Agent (Login Form)” to prove it’s real

3. If validation succeeds, spawns “SQLi Reporting Agent (Login Form)”

4. Only then is the vulnerability officially reported

If the validation agent can’t build a working PoC, the finding is discarded. No “this might be vulnerable” noise.
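The gate described above can be sketched in a few lines. Treat this as an illustration under my own assumptions, not Strix’s implementation: `Finding`, `run_pipeline`, and `fake_validator` are hypothetical names, and the validator is a stand-in that pretends only the SQLi candidate can be exploited.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Finding:
    """A candidate vulnerability (hypothetical structure for illustration)."""
    title: str
    location: str
    poc: Optional[str] = None  # set only when validation produces a working PoC


def run_pipeline(
    candidates: List[Finding],
    validate: Callable[[Finding], Optional[str]],
) -> List[Finding]:
    """Keep only the findings for which the validator returns a PoC."""
    reported = []
    for finding in candidates:
        poc = validate(finding)
        if poc is None:
            continue  # no working PoC: the finding is dropped, not reported
        finding.poc = poc
        reported.append(finding)
    return reported


def fake_validator(finding: Finding) -> Optional[str]:
    """Stand-in validator: pretends that only the SQLi candidate is exploitable."""
    return "' OR '1'='1' -- " if finding.title == "SQLi" else None


candidates = [Finding("SQLi", "login form"), Finding("XSS", "search bar")]
reports = run_pipeline(candidates, fake_validator)
print([(f.title, f.location) for f in reports])
```

The design choice worth noticing is that reporting is downstream of validation: nothing reaches the report list unless it carries a PoC.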

4.4 Three Scan Modes with Different Strategies

The scan modes aren’t just timeout differences - they use completely different testing strategies loaded as skills:

Quick mode (time-boxed, high-impact only):

• Focuses on recent git changes in white-box mode

• Tests only critical flows: auth, access control, RCE, SQLi, SSRF

• Skips exhaustive enumeration

• Minimal agent spawning

• Designed for CI/CD pipelines

Standard mode (balanced, systematic):

• Full reconnaissance and business logic analysis

• Tests all major vulnerability classes

• Methodical coverage without exhaustive depth

• Moderate agent parallelization

Deep mode (exhaustive, relentless):

• The system prompt says “GO SUPER HARD” and “2000+ steps MINIMUM”

• Exhaustive subdomain enumeration, port scanning, content discovery

• Tests every parameter with every applicable technique

• Massive agent parallelization (dozens of specialized agents)

• Vulnerability chaining to maximize impact

• Designed to find what automated scanners miss
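One way to picture the difference between the three modes is as distinct profiles rather than a single timeout knob. The sketch below simply encodes the bullets above as data; `ScanProfile`, `PROFILES`, and the field names are hypothetical and are not taken from Strix’s configuration format.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class ScanProfile:
    """Hypothetical summary of a scan mode, derived from the descriptions above."""
    name: str
    focus: Tuple[str, ...]
    exhaustive_enumeration: bool
    parallelism: str  # "minimal", "moderate", or "massive"
    min_steps: Optional[int] = None  # only deep mode states a minimum


PROFILES: Dict[str, ScanProfile] = {
    "quick": ScanProfile(
        "quick",
        ("auth", "access control", "RCE", "SQLi", "SSRF"),
        exhaustive_enumeration=False,
        parallelism="minimal",
    ),
    "standard": ScanProfile(
        "standard",
        ("all major vulnerability classes",),
        exhaustive_enumeration=False,
        parallelism="moderate",
    ),
    "deep": ScanProfile(
        "deep",
        ("every parameter with every applicable technique",),
        exhaustive_enumeration=True,
        parallelism="massive",
        min_steps=2000,  # from the "2000+ steps MINIMUM" line in the system prompt
    ),
}

for profile in PROFILES.values():
    print(f"{profile.name}: {profile.parallelism} parallelism, focus={profile.focus}")
```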

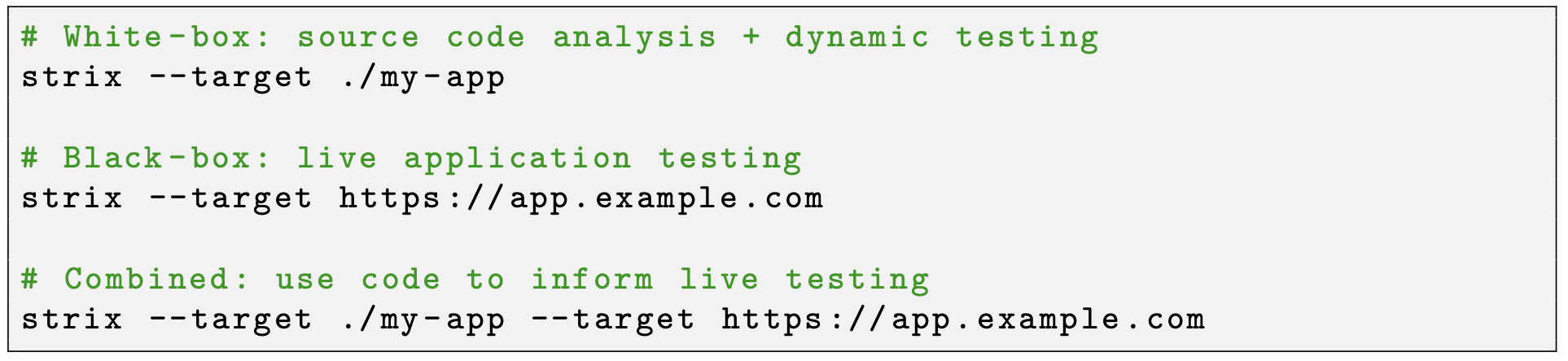

4.5 White-Box + Black-Box + Combined Testing

Strix handles three testing modes:

In white-box mode, agents don’t just read code—they run it. The system prompt mandates: “You MUST begin at the very first step by running the code and testing live.” Static analysis alone isn’t enough. Combined mode is powerful: agents use repository insights to accelerate live testing, and use dynamic findings to prioritize code review.

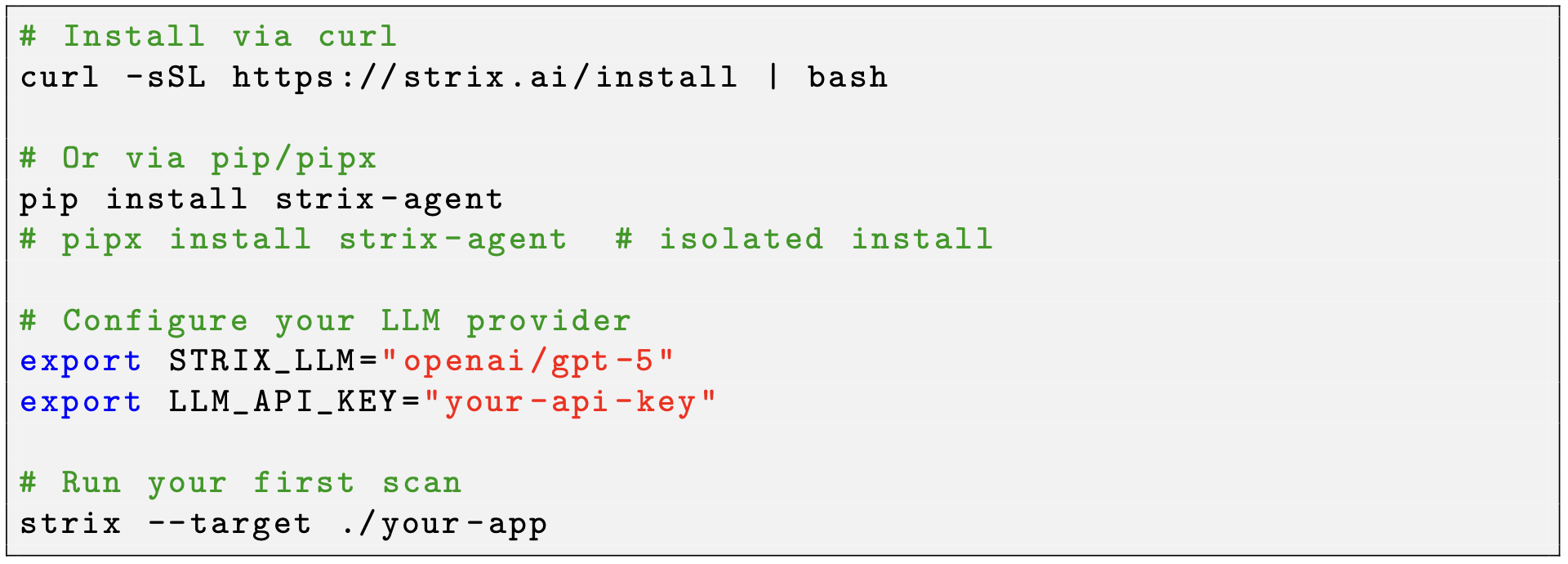

5 Getting Started

Installation requires Docker (must be running) and an LLM API key:

Strix saves your config to ~/.strix/cli-config.json so you don’t re-enter it every time.

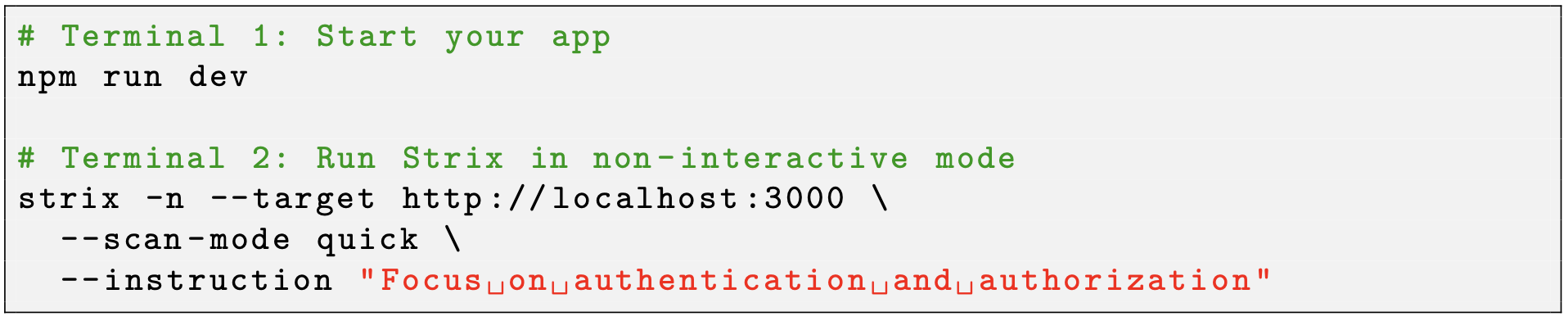

Minimal example testing a local dev server:

The -n flag runs headless (no TUI), perfect for CI/CD. Results save to strix_runs/<run-name>/ with markdown reports and full logs.
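If you want to script the headless run, a small wrapper might look like the sketch below. The -n flag comes from this review; the `--target` and `--scan-mode` options, the results directory name `strix_runs`, and the file name `report.md` are assumptions on my part, so check `strix --help` and your own run output before relying on them. The sketch only builds the command and searches a throwaway directory; it does not start a scan.

```python
import tempfile
from pathlib import Path
from typing import List


def build_command(target: str, mode: str = "quick") -> List[str]:
    """Assemble a headless scan command (option names are assumptions)."""
    return ["strix", "-n", "--target", target, "--scan-mode", mode]


def find_reports(run_dir: Path) -> List[Path]:
    """Return every markdown file under a run directory, sorted by path."""
    return sorted(run_dir.rglob("*.md"))


print(" ".join(build_command("http://localhost:3000")))

# Demonstrate report discovery against a temporary, fake run directory.
with tempfile.TemporaryDirectory() as tmp:
    run_dir = Path(tmp) / "strix_runs" / "demo-run"
    run_dir.mkdir(parents=True)
    (run_dir / "report.md").write_text("# demo finding\n", encoding="utf-8")
    found = [p.name for p in find_reports(run_dir)]

print(found)
```

To actually launch the scan you could pass the list from `build_command` to `subprocess.run`, once you have confirmed the option names against your installed version.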

Recommended models (from the docs):

• OpenAI GPT-5: openai/gpt-5

• Anthropic Claude Sonnet 4.6: anthropic/claude-sonnet-4-6

• Google Gemini 3 Pro Preview: vertex_ai/gemini-3-pro-preview

You can also use Strix Router (https://models.strix.ai) which gives you a single API key for multiple providers plus $10 free credit.

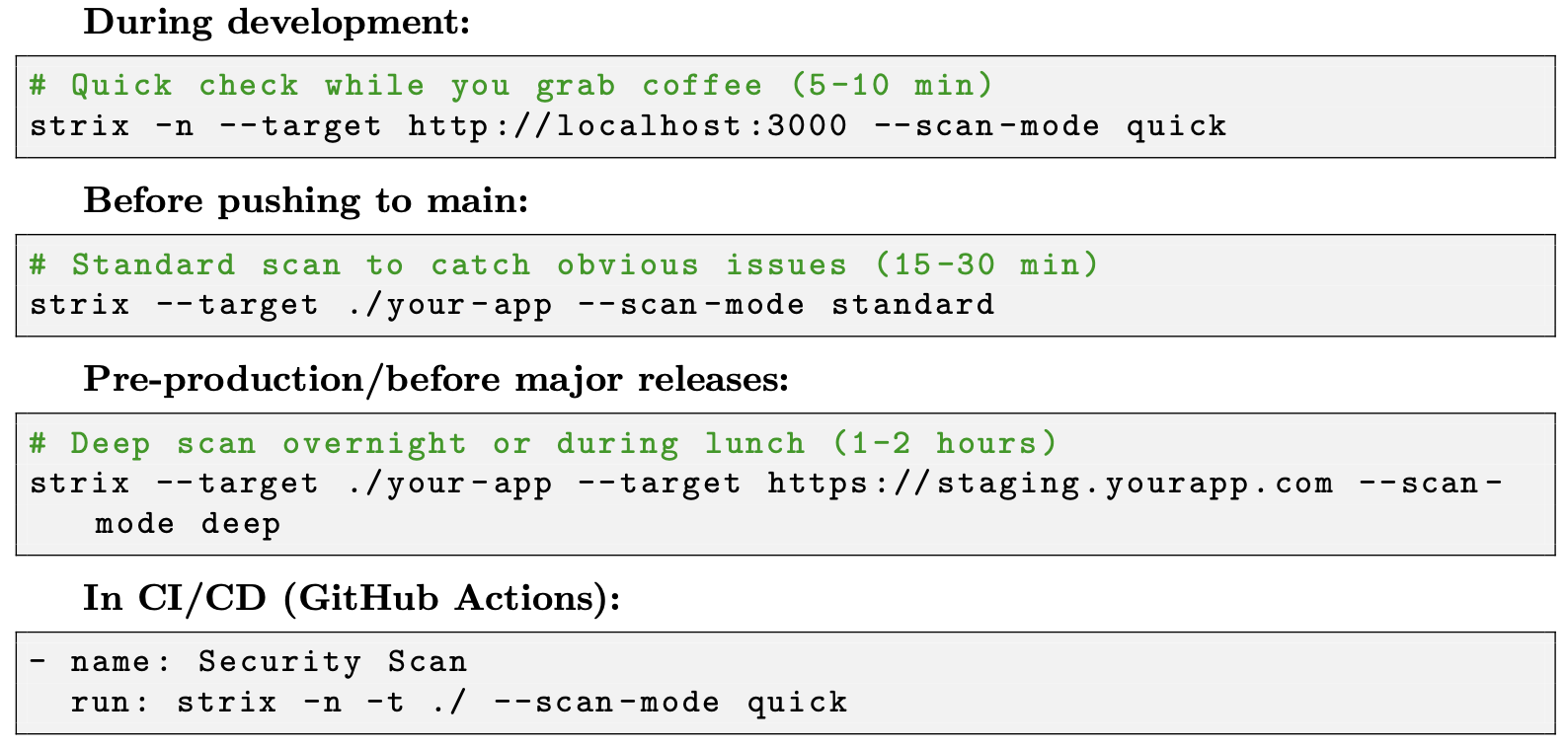

6 The Vibe Coding Workflow

Here’s how Strix fits into a fast-paced development cycle:

The key insight: you don’t need to stop coding. Run quick mode in a background terminal, keep building, and check the results when you’re ready to context-switch.

7 Pros

Validation is real. The multi-agent workflow with mandatory validation means zero false positives. If it’s in the report, you can reproduce it. This alone saves hours compared to traditional scanners.

Deep vulnerability knowledge. The skill system is impressive. Strix includes 17 vulnerability-specific skills, each with comprehensive guides covering basic to advanced techniques, DBMS-specific primitives, bypass methods, and chaining strategies. The IDOR skill alone is 213 lines covering horizontal/vertical access, GraphQL exploitation, microservices token confusion, and multi-tenant boundary violations. This isn’t script kiddie stuff.

Handles modern stacks. Has specific skills for Next.js, FastAPI, GraphQL, Firebase, and Supabase. The vulnerability skills cover React/Vue/Angular patterns. The browser tool uses Playwright, so it can interact with SPAs just like a real user. The proxy (Caido) handles HTTP/2, WebSockets, and modern protocols.

Flexible testing modes. Quick mode for CI/CD (time-boxed, high-impact only). Standard for dev workflow (balanced coverage). Deep for pre-production (exhaustive, relentless). Each mode uses different strategies, not just different timeouts.

Transparent and educational. The TUI shows you what each agent is doing in real-time. The reports include full attack chains and PoC code. You actually learn security patterns by reading the output.

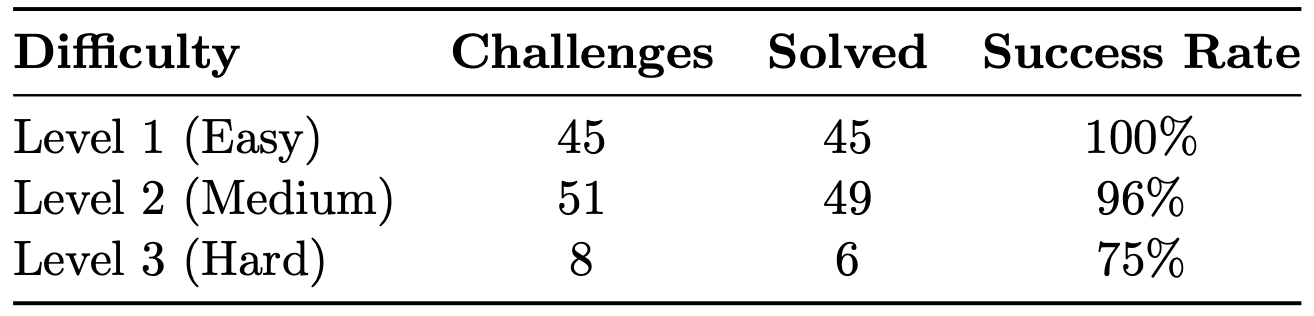

Proven effectiveness. 96% success rate on XBEN benchmark (100/104 challenges). That’s 100% on easy, 96% on medium, 75% on hard. Average solve time: 19 minutes per challenge. Total cost: $337 for 100 challenges (~$3.37 per challenge). This isn’t vaporware. It works.

Cost-effective for what it does. Runs locally with your own API keys. Average scan costs $3-5 in API calls (quick mode) to $10-20 (deep mode). Compare that to $500/month SaaS tools or $5,000+ manual pentests.

CI/CD ready. Non-interactive mode (-n flag) exits with non-zero code when vulnerabilities are found. GitHub Actions integration is straightforward. Quick mode is designed for pull request checks.
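Because the signal is just an exit code, a pipeline gate can be very small. Here is a hedged sketch: `scan_passed` is a hypothetical helper, and the two commands are stand-ins that exit with 0 and 2 so the example runs without Strix installed. In a real pipeline you would pass your actual headless scan command instead.

```python
import subprocess
import sys
from typing import List


def scan_passed(cmd: List[str]) -> bool:
    """Run a scanner command and report whether it exited with code 0.

    A non-zero code could also mean the scan itself failed to run;
    this sketch treats both cases as a blocked merge.
    """
    completed = subprocess.run(cmd, check=False)
    return completed.returncode == 0


# Stand-ins for the real scanner so the sketch runs anywhere.
clean_scan = [sys.executable, "-c", "raise SystemExit(0)"]
vulnerable_scan = [sys.executable, "-c", "raise SystemExit(2)"]

results = {
    "clean": scan_passed(clean_scan),
    "vulnerable": scan_passed(vulnerable_scan),
}
print(results)
```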

Extensible. You can add custom skills (markdown files with testing strategies) in the strix/skills/custom/ directory. The framework is well-structured Python with type hints and comprehensive tooling (mypy, ruff, pytest). Skills are dynamically injected into agent prompts - each agent can load up to 5 specialized skills relevant to its task.
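To show what “dynamically injected, up to 5 per agent” could look like, here is a rough sketch. It is not how Strix does it internally: `load_skills`, `build_prompt`, the `## Skill:` heading, and the demo file names are all hypothetical. The only detail taken from the review is the cap of five skills per agent.

```python
import tempfile
from pathlib import Path
from typing import Dict, List

MAX_SKILLS_PER_AGENT = 5  # cap mentioned in the review


def load_skills(skills_dir: Path) -> Dict[str, str]:
    """Read every markdown file in a directory into a name -> text mapping."""
    return {
        path.stem: path.read_text(encoding="utf-8")
        for path in sorted(skills_dir.glob("*.md"))
    }


def build_prompt(base_prompt: str, requested: List[str], library: Dict[str, str]) -> str:
    """Append the requested skills that exist, capped at MAX_SKILLS_PER_AGENT."""
    chosen = [name for name in requested if name in library][:MAX_SKILLS_PER_AGENT]
    sections = [base_prompt] + [f"## Skill: {name}\n{library[name]}" for name in chosen]
    return "\n\n".join(sections)


# Demo with throwaway skill files; real skills would live in your skills directory.
names = ["idor", "sql_injection", "xss", "ssrf", "graphql", "fastapi"]
with tempfile.TemporaryDirectory() as tmp:
    skills_dir = Path(tmp)
    for name in names:
        (skills_dir / f"{name}.md").write_text(f"Notes on {name}.", encoding="utf-8")
    library = load_skills(skills_dir)

prompt = build_prompt("You are a testing agent.", names + ["missing"], library)
print(prompt.count("## Skill:"))
```

Six skills are requested plus one that does not exist; the unknown name is skipped and the sixth valid skill is cut by the cap.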

8 Cons

LLM API dependency. You need an API key for OpenAI, Anthropic, Google, or compatible provider. No offline mode. Budget $5-20 per scan depending on mode and target complexity. Deep scans on large apps can hit $50+.

Docker is mandatory. The sandbox requires Docker running locally. This adds setup friction, especially on Windows (WSL2 works but adds complexity). No native Windows support. If you can’t run Docker, you can’t use Strix.

Slow for deep scans. Deep mode can take 1-2 hours or more. The system prompt literally says “2000+ steps MINIMUM” and “GO SUPER HARD.” That’s thorough, but not instant. Quick mode helps (5-15 minutes), but you trade coverage for speed.

Agent sprawl can be confusing. Deep scans spawn dozens of agents working in parallel. The TUI shows this, but it can be overwhelming to track. The logs are comprehensive but verbose. You need to trust the system is doing the right thing.

Learning curve for customization. Writing good custom skills requires security knowledge. The skill format is markdown with specific structure. The documentation is solid but not exhaustive. You’ll need to read existing skills to understand patterns.

Limited to web applications. If you’re building mobile apps, desktop software, or infrastructure, Strix won’t help. It’s HTTP-focused. No mobile app testing, no binary analysis, no cloud infrastructure scanning.

Young ecosystem. Launched in August 2025, so community resources are still growing. The Discord is active, but you won’t find Stack Overflow answers or extensive third-party tutorials yet.

Resource intensive. The Docker container pulls a full Kali Linux image (several GB). Running multiple agents in parallel uses significant CPU and memory. Not ideal for resource-constrained environments.

No compliance certifications. If you need SOC 2, PCI DSS, or other compliance reports, commercial tools have those. Strix gives you technical findings, not compliance paperwork.

9 Who Should Use It?

Ideal for:

• Solo developers and small teams shipping fast with AI-assisted development (the “vibe coding” crowd)

• Startups building MVPs who can’t afford dedicated security staff yet

• Developers learning security who want to see real exploits and attack chains in action

• Bug bounty hunters looking to automate reconnaissance and initial testing

• Teams that need CI/CD security checks without enterprise overhead

• Projects with budget for LLM API calls but not for commercial security tools

Not ideal for:

• Enterprise teams needing compliance certifications (SOC 2, PCI DSS, etc.)

• Projects with zero budget for LLM API costs (even $5-10 per scan)

• Mobile app or desktop software development (web-only focus)

• Teams that can’t use Docker in their workflow (corporate restrictions, etc.)

• Organizations requiring on-premise, air-gapped solutions

• Projects needing instant results (deep scans take time)

The sweet spot:

You’re building a web app, shipping features quickly, and want real security testing without context switching into “security mode.” You’re comfortable with command-line tools and understand basic security concepts. You have Docker and can expense $20-50/month in API calls.

10 Conclusion

Strix is the most sophisticated open-source AI security testing tool I’ve used. The multi-agent architecture isn’t just marketing. It’s a genuinely clever design that mirrors how real penetration testing teams work. The mandatory validation workflow eliminates false positives. The skill system provides deep vulnerability knowledge that goes way beyond surface-level scanning.

The benchmark results speak for themselves: 96% success rate on XBEN, solving challenges that require actual exploitation, not just detection. The average solve time of 19 minutes per challenge shows it’s not just brute-forcing—it’s reasoning about attack paths.

Is it perfect? No. Deep scans are slow. The Docker dependency adds friction. You need to pay for LLM API calls. Agent sprawl can be overwhelming. But compared to the alternatives (manual testing, commercial tools, or shipping vulnerabilities to production), these are minor tradeoffs.

The timing couldn’t be more relevant. The Mexican government breach that happened yesterday demonstrates what we’re up against: attackers are already weaponising AI for exploitation. Organizations that don’t adopt AI-powered defensive security testing are bringing manual tools to an automated fight. That’s not a winning strategy.

For vibe coders specifically: This is the tool that lets you keep your velocity without sacrificing security. Run quick mode on every PR. Run standard mode before merging to main. Run deep mode before major releases. The cost is negligible ($5-20 per scan) compared to the risk of shipping a critical vulnerability or the disruption of a security incident.

The real test: would I use this on my own projects? Absolutely. It’s now part of my workflow, right alongside my AI coding assistant. In 2026, if you’re using AI to write code, you should be using AI to secure it too.

11 Benchmark Performance

Strix v0.4.0 was tested on the XBEN benchmark—104 web security challenges in CTF format where the agent must discover and exploit vulnerabilities to extract hidden flags.

Overall Results:

• 100 out of 104 challenges solved (96% success rate)

• Average solve time: ~19 minutes per challenge

• Total cost: $337 for 100 challenges (~$3.37 per challenge)
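The headline numbers are easy to sanity-check. The short calculation below uses only the figures quoted above; the total-time estimate assumes the 19-minute average applies to the 100 solved challenges, which is my reading rather than something the benchmark write-up states.

```python
solved, total = 100, 104
total_cost_usd = 337
avg_minutes_per_challenge = 19

success_rate = solved / total                  # fraction of challenges solved
cost_per_solved = total_cost_usd / solved      # dollars per solved challenge
total_hours = solved * avg_minutes_per_challenge / 60  # assumes average covers solved runs

print(f"Success rate: {success_rate:.1%}")
print(f"Cost per solved challenge: ${cost_per_solved:.2f}")
print(f"Approximate total solve time: {total_hours:.1f} hours")
```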

Performance by Difficulty:

The 100% success rate on easy challenges shows solid fundamentals. The 96% on medium challenges demonstrates real-world capability. The 75% on hard challenges (which often require vulnerability chaining and advanced techniques) shows where the current limits are.

For context: these are actual exploitation challenges, not just detection. The agent has to find the vulnerability, build a working exploit, and extract the flag. The 19-minute average solve time is impressive - that’s faster than most human pentesters on similar challenges.

One-line verdict: If you’re building web apps in 2026 and want real, validated security testing without the false positive noise or enterprise overhead, Strix is the best open-source option available. Your results may vary with different LLM providers and target complexity.

_______________________________________________________________

For questions about this review, contact at [email protected], Member, UNO AI-CCORE Team